Not everyone has 20/20 vision. If you’re displaying large amounts of text, it might be easier for your users to listen to your data. When something goes wrong, you might want to provide an audible cue that’s more informative than “beep.” For all of these tasks, the Microsoft TextToSpeech object is just what you need. Peter Vogel leads the conversation.

In all probability, you already have Microsoft’s text-to-speech software installed on your computer. This technology, when passed a string of text, reads (or speaks) the text back to you. I remember the first time that I heard text-to-speech and speech-recognition technology demonstrated on a personal computer. While impressive, it was like a dog walking on its hind legs—not because it was done well, but because you were surprised that it worked at all. Both text reading and speech recognition have come a very long way since then, to the point where Microsoft’s text-to-speech (TTS) technology is distributed for free and is very impressive.

As part of my interest in effective user-interface design, I’ve been spending more time looking at accessibility issues: making user interfaces work for users with less than optimal hearing, vision, and motor control. As a person who owns his second set of bifocals (and is too cheap to buy a 21″ monitor), I’ve mostly been focusing on issues around poor visibility. Blind users typically use screen readers to read a page back to them, for instance, and I’m developing some understanding about how to support screen-reading tools. Blind users are an extreme case, however. Many users with less than optimal sight require only occasional support for text reading.

Nor is text-to-speech technology only worthwhile for visually impaired users. Even for users with adequate sight, the ability to have large amounts of text fed back to them may be a benefit. And how many developers, when writing an error handling routine, really just wanted the application to yell “Don’t do that!” at the user? Alternatively, I know one book author who uses text-to-speech technology to have his words read back to him as part of his editing process.

Getting started with TTS

Getting information about Microsoft’s TTS isn’t easy. Some relevant information can be found in the documentation for Microsoft Agent (the Office Assistant and other annoying little pop-up creatures). The online entry point for Microsoft Agent documentation is at www.microsoft.com/msagent. If the TTS component isn’t installed on your computer (and it probably is, since you’ve already got the Office Assistant), you can download it from that site, along with what documentation there is. To check whether you have TTS installed, open a code module in Access, go to the Tools | References list, and look for the Microsoft Voice Text item.

There are actually two sets of downloads that you’ll need:

- The Agent Core Components

- Text-to-speech engines, which handle the translation from text in a particular language to a particular speaking voice

The core components that I downloaded came with a single text-to-speech engine, named Sam (I’m not making this up). Because I’m a citizen of Canada, a country with two official languages, I also downloaded two additional text-to-speech engines:

- Lernout & Hauspie TTS3000 TTS Engine—French

- Lernout & Hauspie TTS3000 TTS Engine—British English

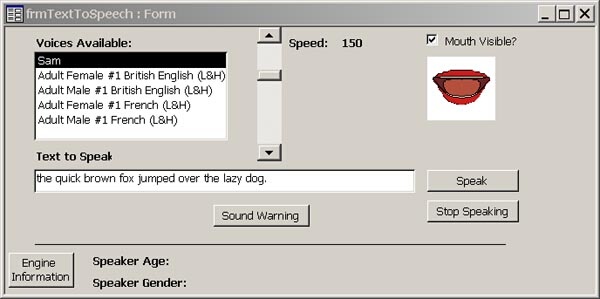

While Sam is a male speaker, the British and French engines include both a male and a female speaking voice. More languages are available on the Agent site, and other engines are available from third-party sources. The engines available on the Agent site have the advantage of being free.

Adding TTS to an application is simple:

- From the toolbox, click on the More Controls button.

- Scroll down through the list to the TextToSpeech Class entry.

- Click on the entry.

- Draw the control on your form.

You’ll end up with a blank white square on your form at design time. At runtime, you’ll get a pair of lips that faintly resembles the Rolling Stones’ logo (see Figure 1 and Figure 2). The resulting ActiveX control will be given the name TextToSpeech1 by Access, and I’ve used that name through the rest of this article.

Figure 1

Figure 2

Once the control is on your form, you’ll need to declare a variable to refer to it. I used this code at the top of my form’s code module to create a variable that would be available to every procedure in that module:

Dim tts As HTTSLib.TextToSpeech

In my Form Load event, I used this code to set the variable to the TextToSpeech object inside the ActiveX control on my form:

Set tts = Me.TextToSpeech1.Object

To create the warning message I mentioned at the beginning of this article, all I needed to do was add this code that passes some text to the object’s Speak method:

tts.Speak "Don't do that."

It’s just a short step from this to putting a text box on your form and having the voice say whatever you type into it (the sample application included in the Download file will let you do this). However, passing a Null value to the Speak method raises an error, so you’ll need to check for that:

If Not IsNull(Me.txtSpeak) Then
    tts.Speak Me.txtSpeak
Else
    tts.Speak "Nothing to say."
End If

To stop a voice from speaking, you can use the StopSpeaking method:

tts.StopSpeaking
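The pieces shown so far could be pulled together in a form module along the following lines. This is only a sketch: the button names cmdSpeak and cmdStop and the helper function TextToSay are hypothetical, and treating a blank string the same way as a Null is a design choice of the sketch rather than something the control requires.

```vba
Option Explicit

' Module-level variable: visible to every procedure in this form's module
Dim tts As HTTSLib.TextToSpeech

Private Sub Form_Load()
    ' Point the variable at the object inside the ActiveX control
    Set tts = Me.TextToSpeech1.Object
End Sub

' Hypothetical helper: returns the text to speak, or the fallback
' when the value is Null or contains only spaces
Function TextToSay(ByVal v As Variant, ByVal fallback As String) As String
    If IsNull(v) Then
        TextToSay = fallback
    ElseIf Len(Trim$(CStr(v))) = 0 Then
        TextToSay = fallback
    Else
        TextToSay = CStr(v)
    End If
End Function

' cmdSpeak and cmdStop are assumed command buttons on the same form
Private Sub cmdSpeak_Click()
    tts.Speak TextToSay(Me.txtSpeak.Value, "Nothing to say.")
End Sub

Private Sub cmdStop_Click()
    tts.StopSpeaking
End Sub
```

Keeping the Null check in one function means that every caller of Speak can share it instead of repeating the If block.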

How good is the pronunciation? Good enough that family members who I tested this on were able to make out what the computer voice was saying. The translation isn’t character for character, either. Faced with “Elizabeth II,” “NASA,” and “FBI,” the TextToSpeech class produced “Elizabeth Second,” “NASA” (as a word), and “F, B, I” (as three separately sounded letters). Punctuation marks also counted, triggering the voice to insert pauses and inflections for commas, periods, and—under at least some circumstances—question marks. Exclamation marks never seemed to affect the voice, unfortunately. That would have added some emphasis to my warning message.

Configuring your voice

You have some ability to configure how TTS presents its voice. As the Speak method runs, the lips on your screen move. This can look odd, but the shape of the mouth varies with the sounds being spoken, which gives the user some visual feedback. For instance, when asked to say “pop pop pop,” the upper teeth in the mouth appear between the animated lips. Asked to say “me me me,” the teeth don’t appear. “Joy joy joy” causes the lower teeth to appear. You can’t control the size of the lips, but if the animated lips don’t provide useful feedback for your user interface, you can make them invisible:

Me.TextToSpeech1.Visible = False

You can also control the speed of the voice. The unit of measure is “words per minute,” with 150 being a typical speaking pace. This code provides a very slow speaking voice:

tts.Speed = 50

If you set the number too high, the object either ignores the number or raises a “catastrophic failure” error (what’s considered “too high” seems to vary from engine to engine). I used this code to reset the speed if I triggered an error:

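On Error Resume Next
tts.Speed = Me.lblSpeed.Caption
' Automation errors such as "catastrophic failure" carry negative numbers, so test for any nonzero value
If Err.Number <> 0 Then
    Err.Clear
    tts.Speed = 150    ' fall back to a typical speaking pace
End If
On Error GoTo 0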
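Because the speed comes from a label caption, it may also be worth rejecting obviously bad input before it ever reaches the Speed property. The sketch below assumes a hypothetical helper named SpeedFromText and a hypothetical sanity ceiling of 1000 words per minute. That ceiling is not any engine's real limit, so the error trap is still needed. The tts parameter is declared As Object only to keep the sketch self-contained.

```vba
Option Explicit

' Hypothetical ceiling used only to reject absurd input
Const MAX_SANE_WPM As Long = 1000

' Returns v as a whole number of words per minute, or fallback when v is
' Null, non-numeric, less than 1, or above the hypothetical ceiling
Function SpeedFromText(ByVal v As Variant, ByVal fallback As Long) As Long
    SpeedFromText = fallback
    If IsNull(v) Then Exit Function
    If Not IsNumeric(v) Then Exit Function
    If CDbl(v) < 1 Or CDbl(v) > MAX_SANE_WPM Then Exit Function
    SpeedFromText = CLng(v)
End Function

' Applies the requested speed and falls back to 150 if the engine objects
Sub ApplySpeed(ByVal tts As Object, ByVal requested As Variant)
    On Error Resume Next
    tts.Speed = SpeedFromText(requested, 150)
    If Err.Number <> 0 Then
        Err.Clear
        tts.Speed = 150
    End If
    On Error GoTo 0
End Sub
```

From the form, a call might look like ApplySpeed tts, Me.lblSpeed.Caption.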

The TextToSpeech object offers a variety of properties that describe the voice, including Age, Gender, and Speaker. However, most of these options are read-only and are controlled by which speech engine you choose to use. When the TextToSpeech class is loaded, it will build a list of speech engines installed on your computer. You control your voice by selecting a specific engine.

A count of the engines available can be found in the CountEngines property. For any property that’s dependent on the speech engine, you pass an integer value to the property to retrieve the setting for that engine. For instance, this code goes through the list of speech engines displaying the name of each engine (note that the first speech engine is at position 1, not position 0):

Dim lng As Long
For lng = 1 To tts.CountEngines
    Debug.Print tts.ModeName(lng)
Next lng

Picking the voice that you want to hear consists of selecting the engine that you want to use. The engine selection is done by passing the position of the engine in the engine list to the object’s Select method. This code selects the second engine:

tts.Select 2

As you might expect, choosing between the British and French voices results in very different pronunciations of the word “Bonjour” (the British engine pronounces it as two words: “Bawn Jer”). However, the choice of engines is more critical than that because different letter combinations mean different things to different speech engines.

For instance, the abbreviation “S.A.” is short for “Société Anonyme” in much of the world (a Société Anonyme is the equivalent of a limited company in North America). Asked to speak the abbreviation “S.A.,” the Sam voice and the British voices sound out the letters; the French voice says “Société Anonyme.” The reverse is true for common North American abbreviations like “Ltd.”: Sam and the British voices pronounce it “limited,” while the French voice sounds it out. Another common business abbreviation has more variations. When presented with “corp,” all the engines pronounce it as a word (with only the French engine giving what I’d regard as the correct pronunciation: “core”). With a period at the end to make “corp.,” Sam said “corporation” and the other voices stuck with their original choice. With an “s” at the end to make “corps,” all the voices but Sam pronounced the word as “core” (Sam pronounced it as the plural of “corp,” a word that I don’t think actually exists). For each speech engine, you’ll find a Word document describing the engine’s behavior on the Microsoft Agent site.

Selecting engines

Given these variations, you may want to check your input text and load an engine that will read it correctly. For instance, if you were pronouncing the names of the contact people in Northwind’s Customers table, you might want to check the Country field and select the engine on that basis.
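As a rough sketch of that idea, the code below picks an engine by looking for a language word in each engine’s ModeName, using only the CountEngines, ModeName, Select, and Speak members shown earlier. The country-to-language mapping, all three procedure names, and the assumption that an engine’s ModeName contains a word such as “French” or “British” are hypothetical; check the names that your own engines report before relying on this.

```vba
Option Explicit

' Hypothetical mapping from a Country value to a word expected in an engine name
Function LanguageHintForCountry(ByVal country As Variant) As String
    Dim key As String
    If Not IsNull(country) Then key = UCase$(Trim$(CStr(country)))
    Select Case key
        Case "FRANCE"
            LanguageHintForCountry = "French"
        Case "UK", "IRELAND"
            LanguageHintForCountry = "British"
        Case Else
            LanguageHintForCountry = ""    ' no preference: keep the current engine
    End Select
End Function

' Returns the position of the first engine whose ModeName contains hint,
' or 0 when there is no hint or no match
Function EnginePositionByName(ByVal tts As Object, ByVal hint As String) As Long
    Dim lng As Long
    If Len(hint) = 0 Then Exit Function
    For lng = 1 To tts.CountEngines        ' engine positions start at 1
        If InStr(1, tts.ModeName(lng), hint, vbTextCompare) > 0 Then
            EnginePositionByName = lng
            Exit Function
        End If
    Next lng
End Function

' Selects a matching engine, if one is found, and then speaks the name
Sub SayContactName(ByVal tts As Object, ByVal contactName As String, _
                   ByVal country As Variant)
    Dim pos As Long
    pos = EnginePositionByName(tts, LanguageHintForCountry(country))
    If pos > 0 Then tts.Select pos
    tts.Speak contactName
End Sub
```

Matching on names avoids hard-coding engine positions, but it depends entirely on how each engine names itself.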

To select the right engine, you could keep track of what engine is loaded into what position, but that would require you to control which engines are installed on your user’s computer. A better method is to use the FindEngine method. This method accepts 28 parameters, all of them required. The first 14 allow you to specify the kind of speech engine that you’re looking for. Setting the tenth parameter, for instance, allows you to specify the gender of the speaker that you’re looking for (1 = female, 2 = male). The second set of 14 parameters allows you to specify a ranking for each of the parameters to indicate which parameters are more important to you. The FindEngine method returns the position of the engine that you’re looking for and can be used with the Select method.

In this code, I’m looking for a female voice of age 30. I’ve indicated that the gender is my No. 1 priority and age is my No. 2 priority:

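' The 14 search values come first (gender is 10th, age is 11th); the 14 ranks follow in the same order
tts.Select tts.FindEngine( _
    "", "", "", "", "", 0, "", "", "", 1, 30, 0, 0, 0, _
    0, 0, 0, 0, 0, 0, 0, 0, 0, 1, 2, 0, 0, 0)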

To check what engine you’ve selected, you can retrieve information about the currently selected engine from those read-only properties that I mentioned earlier. The first step in retrieving the information is to use the CurrentMode property to get the position of the engine currently being used. That information can be used with the properties controlled by the engine to retrieve information about the engine. This code determines the age of the current voice, for instance:

Dim intCurrentMode As Integer
intCurrentMode = tts.CurrentMode
Debug.Print tts.Age(intCurrentMode)
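Combining the engine loop shown earlier with these per-engine properties gives a quick way to see what is installed. The sketch below prints one line per engine to the Immediate window and marks the engine that is currently selected. The procedure names ListEngines and GenderLabel are hypothetical, and the sketch assumes that the Gender property uses the same 1 = female, 2 = male coding described for FindEngine.

```vba
Option Explicit

' Turns a gender code into a label. Reusing the FindEngine coding
' (1 = female, 2 = male) for the Gender property is an assumption.
Function GenderLabel(ByVal code As Long) As String
    Select Case code
        Case 1
            GenderLabel = "female"
        Case 2
            GenderLabel = "male"
        Case Else
            GenderLabel = "unspecified (" & code & ")"
    End Select
End Function

' Prints position, name, gender, and age for every installed engine
Sub ListEngines(ByVal tts As Object)
    Dim lng As Long
    Dim marker As String
    For lng = 1 To tts.CountEngines        ' engine positions start at 1
        If lng = tts.CurrentMode Then
            marker = " (current)"
        Else
            marker = ""
        End If
        Debug.Print lng & ": " & tts.ModeName(lng) & ", " & _
            GenderLabel(tts.Gender(lng)) & ", age " & tts.Age(lng) & marker
    Next lng
End Sub
```

Running ListEngines tts after a call to FindEngine is one way to confirm which voice the search actually selected.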

The engine isn’t perfect. I found, for instance, that TTS can get tongue-tied. Feeding the Speak method a name from the Northwind database with two accented “e” characters (“Frédérique Citeaux”) caused the engine to go into an endless loop, eventually consuming 98 percent of my CPU. This may be a result of the character set that’s used for non-North American characters on my computer—international keyboards and localized operating systems may bypass this problem.

In the meantime, my computer is talking to me. Got to go.